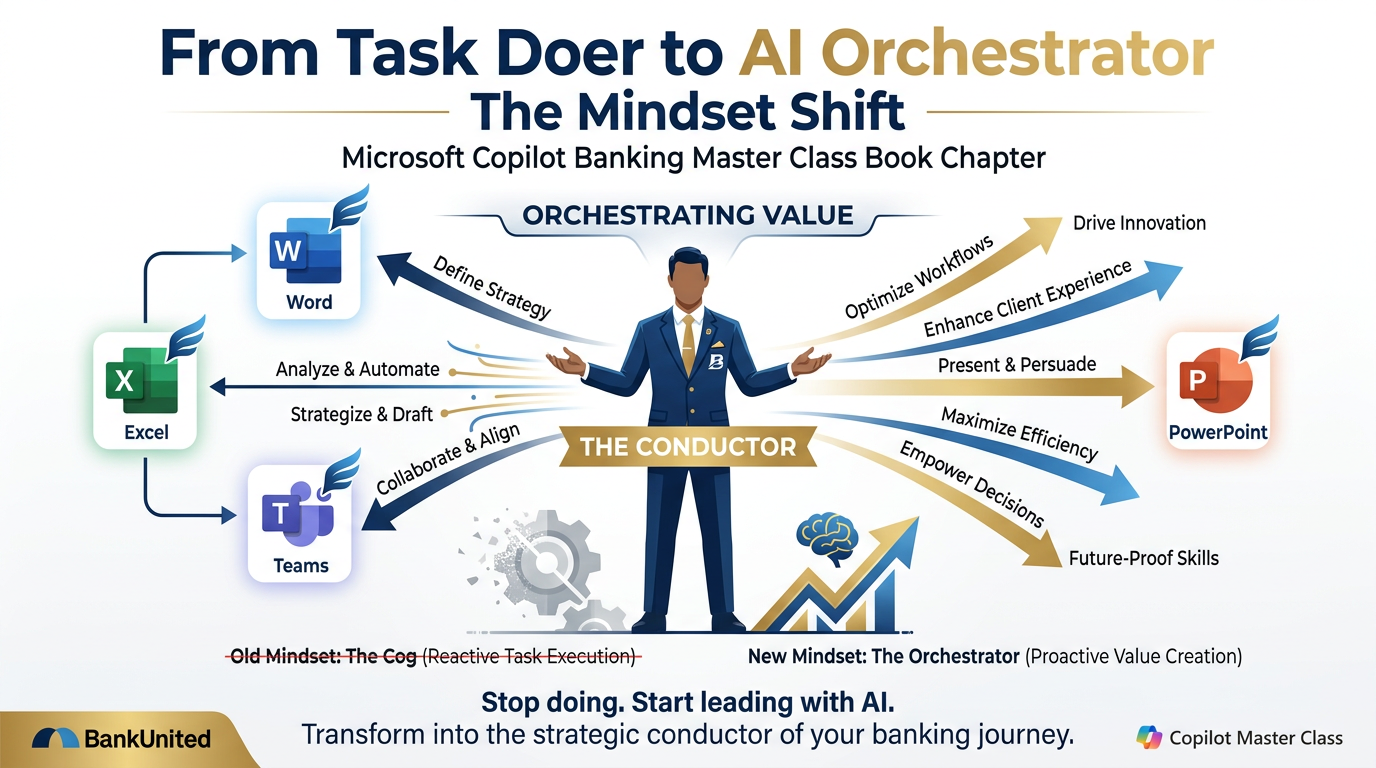

Figure 1: The shift from Task Doer to AI Orchestrator is not a technical upgrade. It is a fundamental redefinition of where your value lives.

“My value multiplies when I orchestrate AI’s capabilities with human judgment.”

Let’s begin with a confession.

Everything in Chapter 1 was true. The LLM brain, the flashlight, the context window, the persona — all of it real, all of it useful. But here is the uncomfortable truth that most AI training programs skip over: knowing the tools is not what determines who thrives in the AI era.

Knowing the tools is table stakes. Everyone will eventually know the tools.

What determines who thrives — what separates the professionals at BankUnited who become genuinely more capable from those who just add a new layer of busyness to the same old workflow — is something that happens before you open Copilot. Something that happens in the space between your ears, in the model of yourself that you carry around every day.

It is the mindset.

And this chapter is about that. Not the soft, motivational-poster version of mindset. The real version — the specific, evidence-based, psychologically grounded version. The one with data behind it, the one that explains what is actually happening when smart people get stuck, and the one that gives you a concrete path from stuck to moving.

This is the inner work. And it is arguably the most important chapter in this book.

1. The Fastest Rate of Change in Human History

Let us start with a fact that should stop you cold.

In 2001, futurist Ray Kurzweil made a prediction that, at the time, most people dismissed as grandiose. He said that the 21st century would see not 100 years of progress but 20,000 years of progress, compressed. And that by roughly 2045, the rate of change in the world would be so rapid, so profound, that our ability to predict what happens next would essentially break down.

You can argue with his timeline. What you cannot argue with is the directional observation: the rate of change is accelerating, and AI is the primary engine.

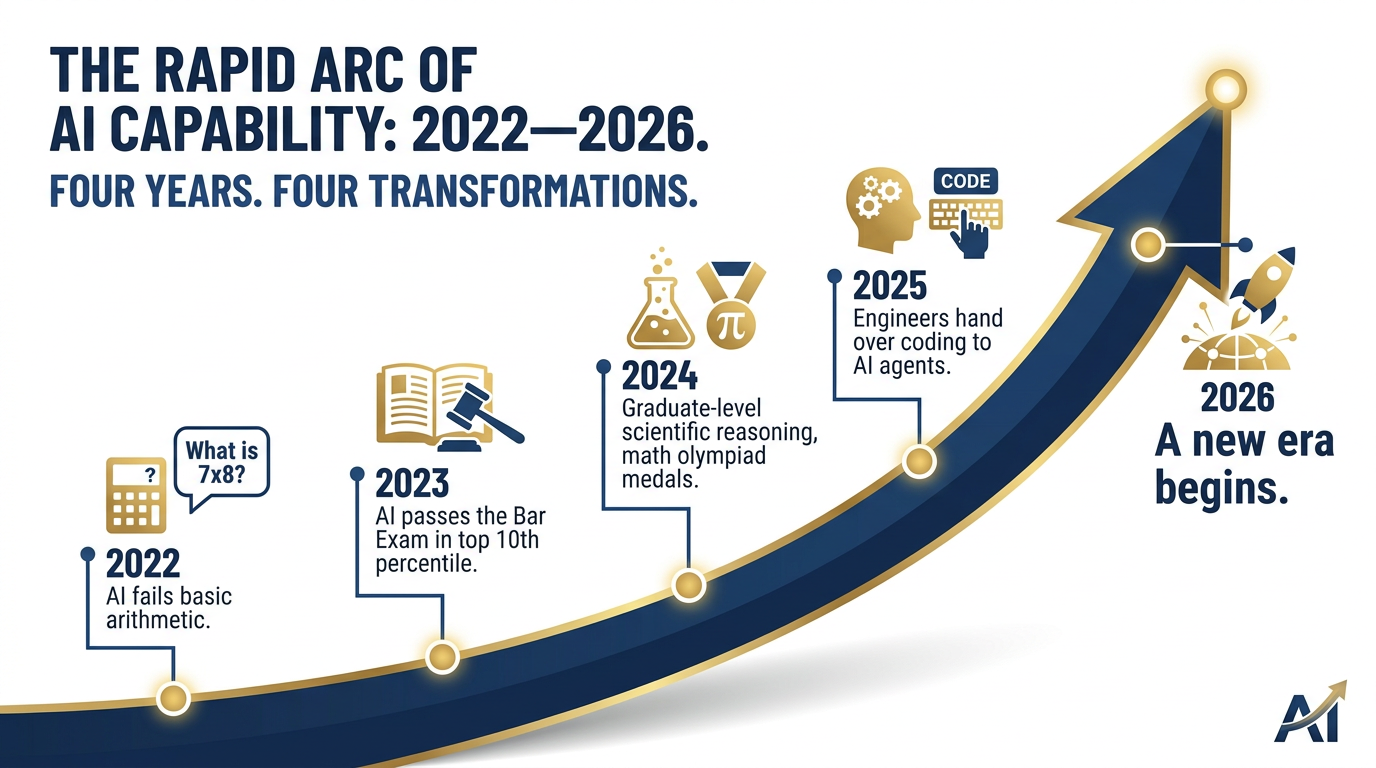

Here is a concrete arc that should make this viscerally real for you. Consider what happened to AI capability between 2022 and 2026 — a span of just four years:

Figure 2: Four years. Four transformations. The pace is not slowing; it is accelerating.

2022: AI models couldn’t reliably multiply 7 × 8. Researchers were careful to note they were “stochastic parrots” — statistically sophisticated, but not reasoning. Matt Shumer, a prominent AI researcher, was publicly documenting the failures.

2023: GPT-4 passes the bar exam — not at the bottom of the curve, but in the 90th percentile. The same year, it passes the US Medical Licensing Exam. The AI world collectively sits up straighter.

2024: AI begins tackling graduate-level scientific reasoning. AI systems reach silver-medal-level performance on International Mathematical Olympiad problems, problems that defeat virtually all human competitors.

2025: Software engineers at major technology companies begin handing over significant portions of their codebases to AI agents. Not assistance. Handover. Some organizations report that AI is writing the majority of new production code.

February 2026: The month you are reading these words. A new era has already begun, and most people inside most organizations — including most banks — have not yet changed how they work at all.

Meanwhile, AI capabilities are doubling every six months. Not improving — doubling. And 75% of employees globally, in a recent Randstad survey, said they fear their skills will be obsolete within three to five years.

Read that again. Three to five years.

Here is what this means for BankUnited specifically: You are not adopting a tool. You are entering a new era of professional life. The question is not whether this era is coming. It is already here. The question is: who are you going to be in it?

2. The Gap Is Real. The Opportunity Is Now.

Before we go any further into mindset, let’s ground ourselves in the business reality. Because this is not an abstract philosophical conversation about the future of humanity. This is about BankUnited’s competitive position and your career trajectory — and both of them are at stake.

The data from the leading research institutions is unambiguous:

Companies that lead in AI adoption generate 1.7× more revenue growth than those that delay (Boston Consulting Group, 2025). Not 1.07×. Not a rounding error. 1.7×.

Despite all the attention AI has received, only 5% of companies globally qualify as “future-built” for AI. Meanwhile, 60% report minimal or no measurable value from their AI investments (BCG, 2025).

Of the companies that are seeing results, 55% achieved them by redesigning their workflows — not just bolting AI onto existing processes (McKinsey, 2025).

15% of workers over 45 currently use AI tools in their work. But 50% want training. They’re not resistant. They’re waiting for permission and support (WorkLife/Randstad, 2025).

That last point deserves a moment.

Half of the workforce is asking to learn. They are not holding back because they don’t care. They are holding back because no one has opened the door, handed them a map, and said: “This is real, this is relevant to your work, and here is how you start.”

That is exactly what this program is doing.

And here is the anecdote that captures the entire opportunity:

Sears Holdings — before its collapse — launched an internal initiative to build AI applications for their operations. Non-technical employees, with no engineering background, built over 50 internal applications. Not fifty sophisticated enterprise systems — but fifty working tools that automated real workflows and saved real time. No engineers required. No IT department approval needed for every step. Just motivated professionals with a training program and permission to build.

The point is not Sears. The point is the pattern. The organizations that win the AI era are not the ones with the best AI team. They are the ones where the most people are building.

BankUnited has 2,000+ employees. The gap between where BankUnited is today and where it could be with 2,000 AI-empowered professionals building, experimenting, and improving — that gap is the opportunity. And it starts with you.

But here is the hard truth: the gap between AI leaders and AI laggards is not, at its core, a technology gap. It is a measurement gap (we don’t track the right things), a culture gap (we don’t make it safe to experiment and fail), and above all, a permission gap (people are waiting for someone to tell them it’s okay to start).

Consider this permission granted.

3. Before You Drive the Ferrari: Fasten Your Seatbelt First

We are about to talk about transformation, growth, and possibility. And we will get there. But first — because this is a book for banking professionals operating in a regulated environment — we need to have the most important conversation in any responsible AI adoption program.

The safety briefing.

This is not the small print. This is not the section you skip. In banking, in a supervised institution like BankUnited, AI safety is not a compliance checkbox — it is the foundation on which all of this rests. The banks that get this wrong do not just lose a case study. They lose client trust, regulatory standing, and sometimes their operating licenses.

Figure 3: The Ferrari can go 200 mph. The seatbelt is not optional.

Here are the non-negotiables — the standing rules for every BankUnited professional using AI:

These rules are not here to slow you down. They are here to keep the program alive — and to keep BankUnited’s record of trustworthiness intact. The Newsweek recognition for trustworthiness was earned by ten thousand decisions made by people who took their responsibilities seriously. This program asks you to bring that same seriousness to your AI practice.

Now let’s talk about what’s possible.

4. The Adaptation Problem: Why Smart People Get Stuck

Here is something that should give you enormous relief: the resistance you feel toward AI is not a sign of weakness. It is not stupidity. It is not being “old school.” It is a deeply human response to a genuinely difficult kind of change.

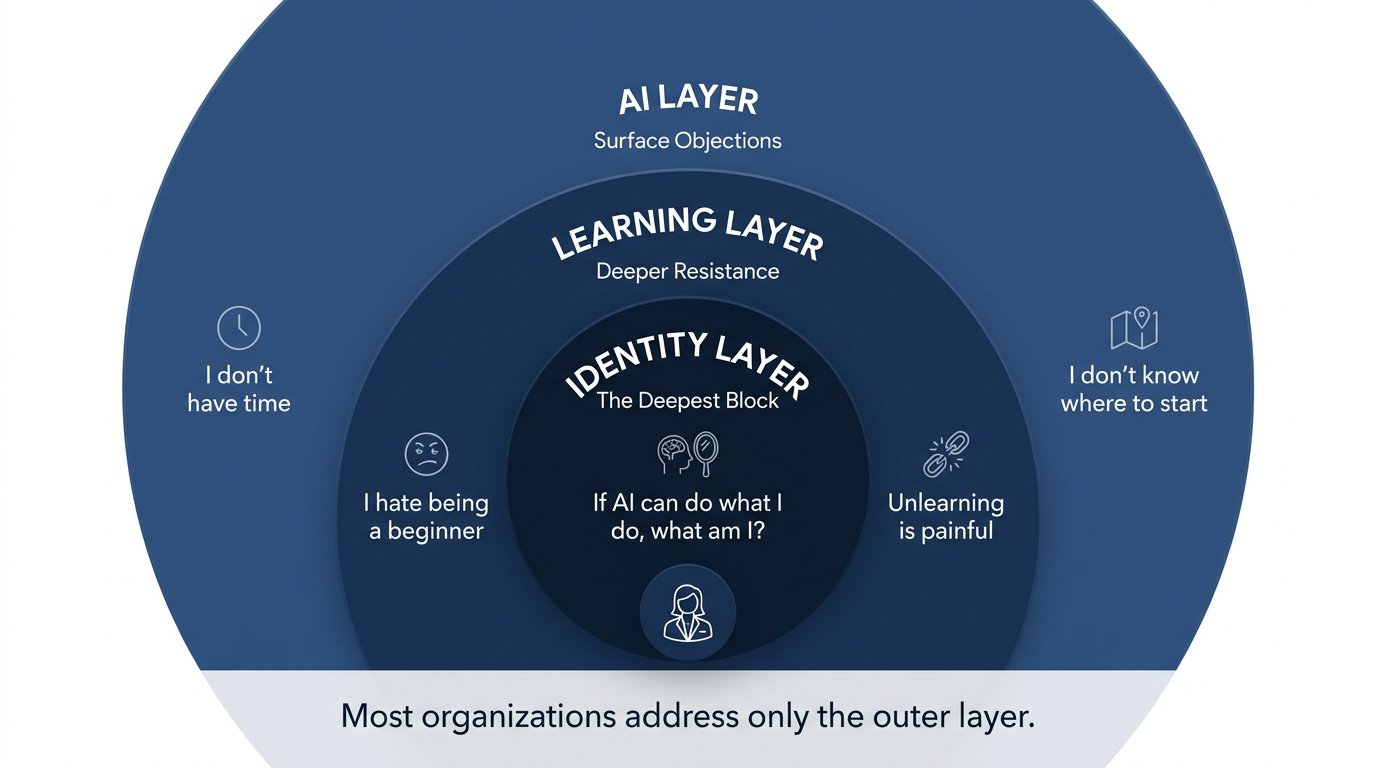

And it operates in layers.

Smart people get stuck because the obstacles are not primarily technical. They are psychological. And because most organizations treat AI adoption as a technology project rather than a human change management project, they address the wrong layer and wonder why adoption stalls.

Let’s name the layers.

Figure 4: The three layers of AI adoption resistance. Most organizations address only the outer layer. The real work is in the middle and the center.

4.1 The AI Layer: The Surface Objections

This is the layer that organizations address most readily. It’s the layer that produces statements like:

“I don’t have time to learn this.”

“I don’t know what’s possible.”

“I don’t know where to start.”

“I tried it and got AI slop — generic, useless output.”

These are real objections. And they have real answers — most of which you will find in this book. The “don’t know where to start” problem is solved by Chapter 1 and the chapters that follow. The “I tried it and got bad output” problem is solved by context engineering and prompting technique. The “don’t have time” problem is solved by mathematics: once you can complete a task in 15 minutes that used to take two hours, the time argument inverts.

But solving the AI layer is not enough. Because most of the resistance is not at the surface.

4.2 The Learning Layer: The Middle Resistance

Below the AI objections is a harder set of feelings:

“I’ve lost my curiosity somewhere along the way.”

“I hate being a beginner. I’ve been good at this for 15 years and I don’t want to feel incompetent again.”

“Unlearning old habits is painful. I have grooves worn in how I do things, and changing them requires more energy than I have some days.”

This is real. And it is worth honoring. If you have spent a decade building expertise in commercial underwriting, or treasury management, or relationship banking — and suddenly a tool appears that can do in 30 seconds what used to take your hard-won skill two hours to produce — there is a grief in that. A loss. Even if the net effect is entirely positive.

The psychological research on this is clear: humans experience the loss of a professional identity marker with the same emotional weight as a personal loss. Your expertise is not just what you do — it is, in many ways, who you are. And any tool that disrupts that expertise does not feel, emotionally, like a gift. It feels like a threat.

Which brings us to the layer that almost no AI training program ever addresses.

4.3 The Identity Layer: The Deepest Resistance

Deep beneath the surface objections and the learning discomfort is the most powerful block of all:

“I am what I do. If AI can do what I do, what am I?”

This is the question that keeps people from experimenting. It is the question that makes smart, capable professionals quietly resistant to tools they intellectually know are beneficial. It is the question that shows up as cynicism in team meetings, as minimal compliance with “mandatory” AI training, and as a subtle unwillingness to commit to the new way of working even after they’ve been trained.

And it deserves a direct, honest answer.

You are not your tasks. You are your judgment. You are your relationships. You are your institutional knowledge, your cultural context, your ethical sensibility, your ability to read a room and know what a client actually needs, even when they don’t know how to ask for it. You are the person who shows up on a difficult call and says the right thing not because an algorithm predicted it but because you have lived through similar moments and earned the wisdom of them.

AI cannot replace any of that. It can only amplify it.

But here is the crucial shift: to unlock that amplification, you have to let go of the tasks. Not the judgment. The tasks. The drafting, the formatting, the summarizing, the researching, the scheduling — the cognitive labor that used to be what “being good at your job” looked like. That work is going to AI. What remains — and what gets amplified — is everything that made you good at your job in the first place, before the tasks.

The identity shift is not: “I used to be a banker and now I’m an AI user.”

The identity shift is: “I used to be a banker who spent 40% of my time on tasks. Now I am a banker who spends 100% of my time on judgment.”

That is a promotion. Not a demotion.

5. It’s Not a Productivity Crisis. It’s a Purpose Crisis.

Let’s look at the numbers that nobody talks about in AI adoption conversations — because they reveal what is really going on.

Only 18% of employees globally feel their job aligns with a sense of purpose (Gallup, 2025).

89% of workers fear AI will make them obsolete (Resume Now, 2025).

77% of employees say that learning new skills gives them a genuine sense of purpose (TalentLMS, 2024).

Engagement is 5.6× higher when work connects to a sense of purpose (Gallup, 2025).

Trust in the employer is 144% higher when workers receive hands-on AI training: not just communication or policy, but actual, guided practice (HBR/Deloitte, 2025).

Read that last number carefully. One hundred and forty-four percent higher trust — just from receiving hands-on training. Not a slide deck about AI strategy. Not an email from the CEO about the future. Hands-on training. Practice. Someone sitting beside you and showing you how.

This is why this master class exists.

But the deeper insight in these numbers is not about AI at all. The deeper insight is this: the crisis that most organizations are mislabeling as an “AI adoption problem” is actually a purpose crisis.

People who feel their work is meaningful throw themselves at new tools. People who feel their work is soul-crushing wheel-spinning treat AI adoption as one more item on the list of things management is making them do.

The solution to a purpose crisis is not a better training platform. It is a reframe of what the work is — and what the professional is — in the context of AI.

The reframe is this: upskilling is not the cost of AI adoption. It is the point.

The organizations that get this right — the ones where AI adoption actually takes hold and generates the 1.7× revenue multiplier — are the ones where leaders connect AI learning to professional growth, not to operational efficiency. They say: “We are doing this because we want you to become more powerful in your role. Because we believe your judgment is valuable and AI is what lets that judgment do more.” That reframe changes everything.

BankUnited has always believed in the development of its people. The Newsweek recognition for trustworthiness was not an accident — it was the accumulated result of thousands of people who cared about their work and the clients they served. That care is the raw material. AI is the amplifier.

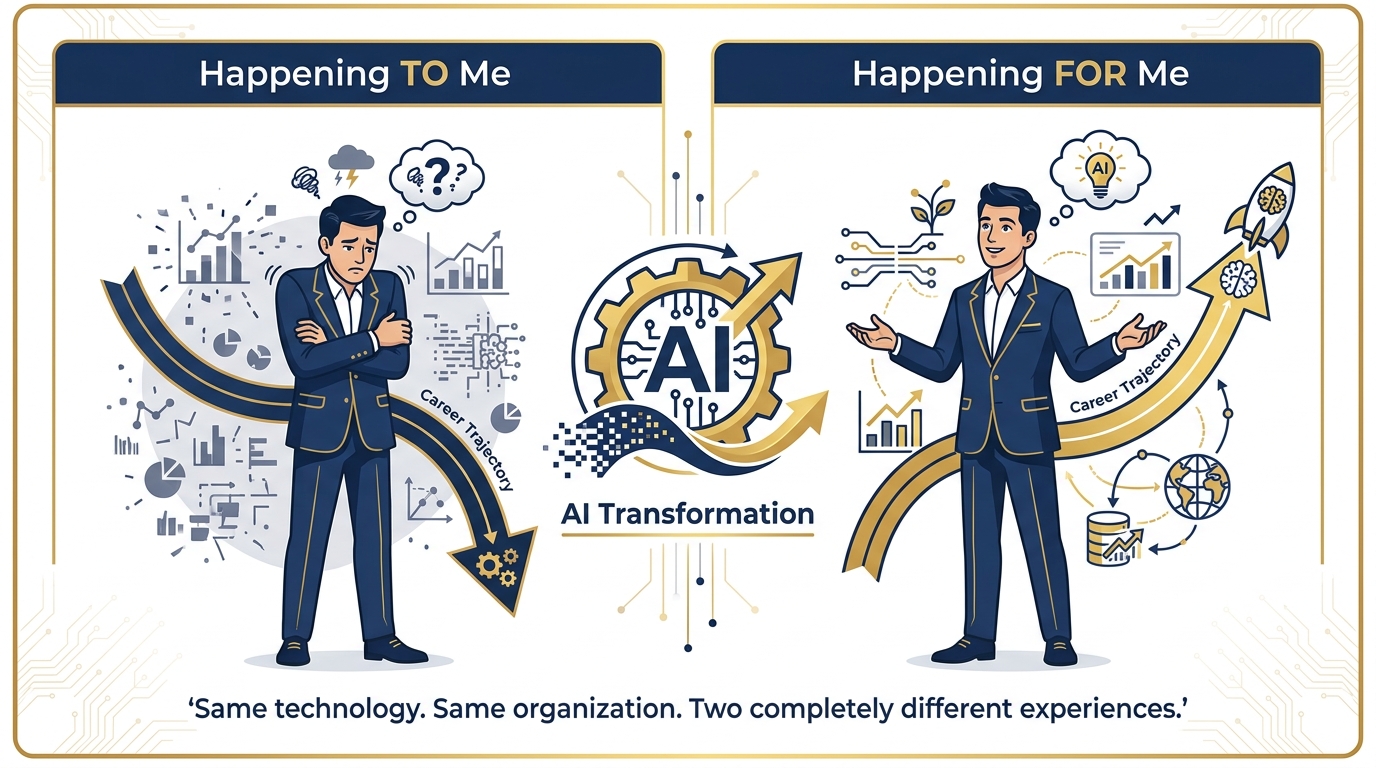

The question is whether you can feel that. Whether this is happening to you, or for you.

Which brings us to the most important single question in this entire chapter.

6. The Cognitive Journey: Five Reframes That Change Everything

Before we get to that question, let us arm you with the specific reframes that the professionals who successfully navigate the AI transition make — often implicitly, sometimes consciously.

These are not affirmations. They are structural shifts in how you position yourself relative to the change:

Table 1: The Five Reframes, From Resistance to Orchestration

| The Old Frame | The New Frame |
|---|---|
| “My organization needs to change.” | “I need to change first. I model, then I lead.” |
| “I’ll delegate this to someone on my team.” | “I’ll model this myself. My team needs to see me do it.” |
| “Someday, when things settle down.” | “Monday. It starts Monday.” |
| “I’ve read a lot about this.” | “I’ve done this. Here’s what I learned from doing it.” |
| “AI is impressive / scary.” | “AI is a tool I can direct. Let me try it on something small and real.” |
| “My people need training.” | “My people need permission. And practice. I can give both.” |

The most important reframe in that table is the second one: “I’ll model this myself.”

In every successful AI adoption case study — from JPMorgan Chase to ING to the Sears internal app example — the pattern is the same. Change does not cascade downward from a policy memo. It radiates outward from a person. Usually a manager, or a senior individual contributor, or a respected team member, who started using the tool publicly, talked about what they were learning, shared their mistakes as openly as their wins, and by doing so made it safe for everyone around them to try.

If you are a leader at BankUnited, this section is specifically for you: the most powerful thing you can do for your team’s AI adoption is not to mandate training. It is to show up at the next team meeting and say, “I tried something in Copilot this week. Here’s what worked. Here’s what didn’t. Here’s what I’m going to try next.”

That is how cultures change.

7. Is This Happening To Me, or For Me?

Here it is. The single most important question you can ask yourself this year.

Not “Should I use AI?” — that question is already answered. Yes. Not “Am I good enough to learn this?” — that question is irrelevant. Everyone is a beginner. Not “What if I fail?” — failure here is just a prompt that didn’t work, and you try again.

The question is: Is this happening to me, or for me?

Figure 5: Same technology. Same organization. Two completely different experiences, determined entirely by the frame.

This question matters because the answer determines your posture — and your posture determines everything else. It determines whether you approach Copilot with curiosity or resignation. It determines whether you experiment or comply. It determines whether you build the Showcase project in Part IV of this book with genuine investment or performative minimum effort.

The people who answer “for me” are not naive. They are not blind to the risks or the disruptions. They see the same uncertainty that everyone sees. But they have made a deliberate choice to approach the moment as an opportunity to grow, to add value in new ways, to become more capable in their work than they were before AI existed.

That choice is entirely available to you. Right now. In the next ten seconds.

Make it.

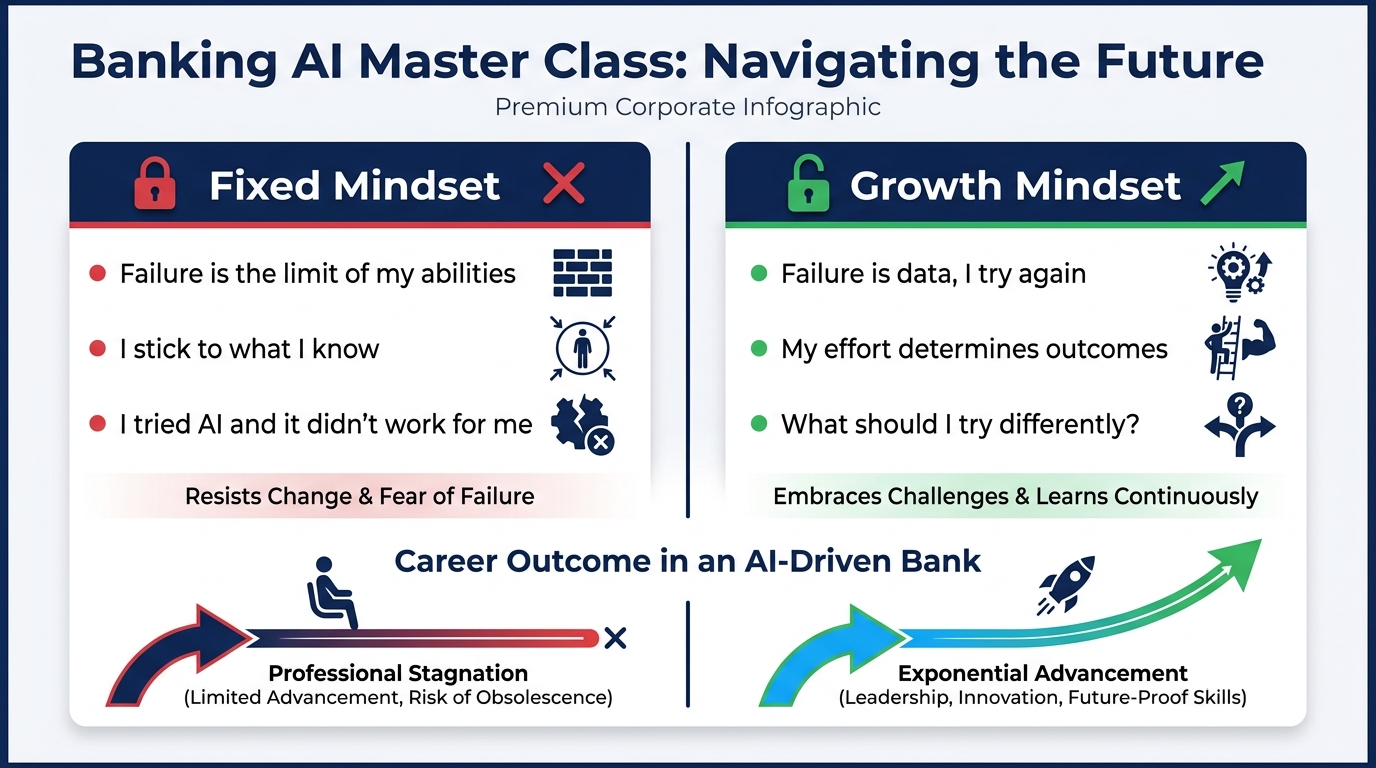

8. Fixed Mindset vs. Growth Mindset: The Carol Dweck Foundation, Updated for AI

You may have encountered Carol Dweck’s work on mindset before. It has become one of the most replicated and applied frameworks in educational psychology. But most presentations of it miss the dimension that is most relevant to the AI era. Let’s go to the original and then extend it forward.

Dweck’s core insight, derived from decades of research at Stanford, is this: your belief about the nature of your own abilities determines the quality of your engagement with challenge.

People with a fixed mindset believe their abilities are essentially innate — that intelligence and talent are things you either have or you don’t, and that difficulty is evidence of being at the limit of those fixed abilities. When they fail, the conclusion is: “I’m not cut out for this.”

People with a growth mindset believe their abilities are malleable — that intelligence and competence develop through effort, experimentation, and learning from failure. When they fail, the conclusion is: “I haven’t learned this yet. Let me try differently.”

The word “yet” is everything.

Figure 6: Same challenge. Same moment. Two completely different internal conversations, and two completely different outcomes.

Table 2: Fixed vs. Growth Mindset, The AI Era Edition

| Fixed Mindset | Growth Mindset |
|---|---|
| “Failure is the limit of my abilities.” | “Failure is data. I try again with better information.” |
| “I stick to what I know. That’s where I’m safe.” | “My effort and willingness to experiment determine my outcomes.” |
| “Feedback is personal. It means I’m not good enough.” | “Feedback is fuel. I am curious about what I can learn from it.” |
| “AI is something that happens to people like me.” | “AI is a skill. Skills are learnable. I learn skills.” |
| “I tried it and didn’t get good results. So it doesn’t work for me.” | “I tried it and didn’t get good results. What should I try differently?” |
| “My value is in what I know.” | “My value is in my judgment. I can always know more by knowing how to ask.” |

Dweck’s research showed that mindset can change. It is not fixed. The act of understanding the fixed/growth distinction — of naming the fixed voice when you hear it inside your own head — is itself a growth intervention.

But the AI era adds a new dimension to Dweck’s work that she did not anticipate when she was studying students with math problems in the 1980s. The AI era teaches us not only that our abilities are not fixed, but that our role is not fixed either.

The commercial banker who believed their value was in their ability to manually build a complex credit model in Excel — that role, in that exact form, is not permanent. But the judgment, the client knowledge, the ability to ask the right questions at the right moment — that is permanent. In fact, it becomes more valuable as AI handles the mechanical parts.

The fixed mindset says: “If my role changes, I lose.”

The growth mindset says: “If my role evolves, I adapt. And my judgment — the thing that was always the real value — gets amplified.”

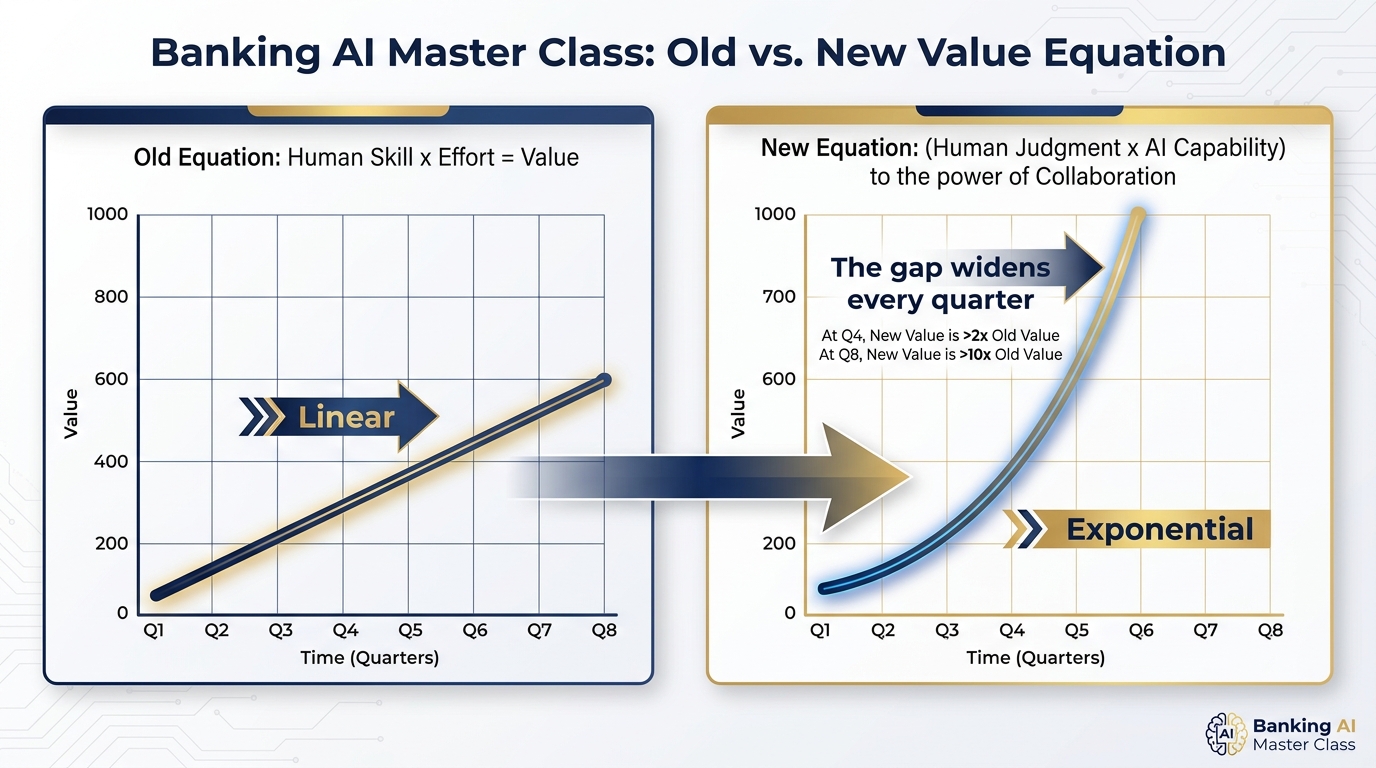

9. The New Value Equation

Let’s make this precise. Because the shift from fixed-role to adaptive-role has a mathematical expression, and seeing the math changes how you feel about the transition.

The old value equation:

In this model, the most productive person is the one who works hardest and has the most technical skill. Value grows linearly with input. More hours, more expertise, more value. This is the model that governed professional excellence for most of the 20th century and the early 21st century.

The new value equation:

Figure 7: Linear vs. exponential. The gap between those who operate under the old equation and those who operate under the new one grows wider every quarter.

Notice the exponent. This is not an accident. The collaboration between human judgment and AI capability is not additive — it is multiplicative. And then it compounds.

Here is what this means in practice:

A relationship manager who uses AI to research a client’s industry before a pitch, draft the agenda, synthesize the previous meeting notes, and generate three customized talking points — and then applies their own judgment to decide which points will land given what they know about this particular client’s personality and priorities — is not doing the same job with a faster computer. They are operating at a qualitatively different level. Their client experience is better. Their preparation is deeper. Their recommendations are sharper.

And the gap between that RM and one who prepares the same old way — the hard work, the manual research, the generic talking points from memory — does not close over time. It widens. Every quarter. Every year. Because the AI-augmented RM is also learning, iterating, improving their prompts, building better agents, compounding their advantage.

This is the shift from linear to exponential. And it is the entire game.

The professionals who understand this are not threatened by AI. They are energized by it. Because they see that the exponent in this equation is powered by them: by their judgment, their relationships, their institutional knowledge, their domain expertise. AI doesn’t diminish those things. It plugs them into an exponential engine.
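The contrast between linear and compounding growth described above can be illustrated with a small sketch. The chapter's own equations are not reproduced here, so the starting value, the fixed quarterly increment, and the 10% quarterly growth rate are purely illustrative assumptions, not figures from any study. The function names are hypothetical as well.

```python
# Illustrative only: compares a linear trajectory with a compounding one.
# All numbers are assumptions chosen for the example, not data from the chapter.


def linear_value(base: float, increment: float, quarters: int) -> list[float]:
    """Value that grows by the same fixed amount every quarter."""
    return [base + increment * q for q in range(quarters + 1)]


def compounding_value(base: float, rate: float, quarters: int) -> list[float]:
    """Value that grows by the same percentage every quarter."""
    return [base * (1 + rate) ** q for q in range(quarters + 1)]


def gap_per_quarter(linear: list[float], compounding: list[float]) -> list[float]:
    """Difference between the compounding and linear trajectories, quarter by quarter."""
    return [c - l for l, c in zip(linear, compounding)]


if __name__ == "__main__":
    quarters = 8  # two years, an arbitrary horizon for the example
    linear = linear_value(base=100.0, increment=5.0, quarters=quarters)
    compounding = compounding_value(base=100.0, rate=0.10, quarters=quarters)
    for q, gap in enumerate(gap_per_quarter(linear, compounding)):
        print(f"Quarter {q}: gap = {gap:.1f}")
```

Under these assumed numbers, both trajectories start at the same point and the gap grows in every subsequent quarter, which is the pattern the text describes. Different assumptions would change the size of the gap, and a compounding rate that is too small relative to the linear increment would not produce a widening gap at all.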

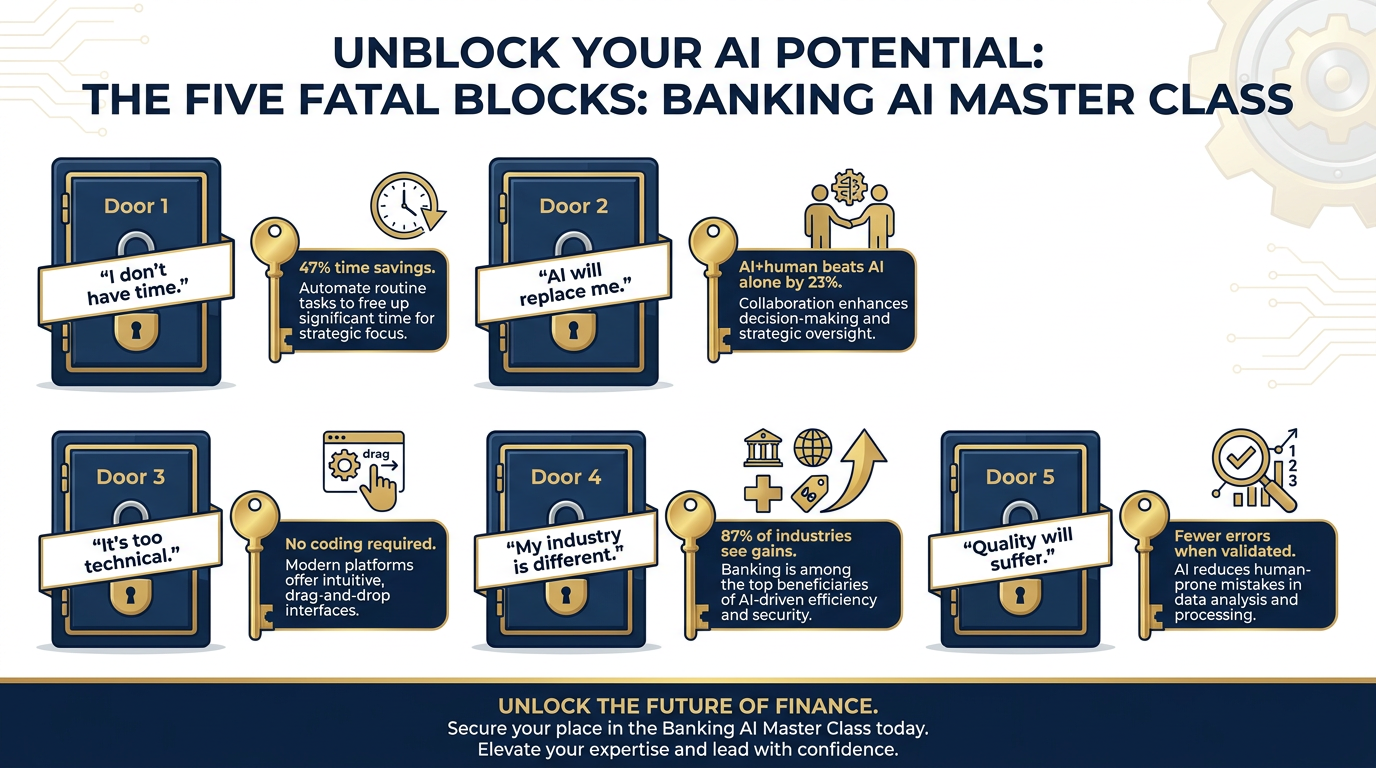

10. The Five Fatal Blocks: Excuses That Kill Careers

We need to be direct now. Because while everything in this chapter has been accurate and grounded, there are five specific statements that function as career-limiting beliefs when held too tightly. You have almost certainly heard them in your organization — and perhaps felt some of them yourself.

Let’s name each one, and address it with the same rigor we would apply to a credit request with weak supporting documentation.

Figure 8: Every one of these blocks has a research-backed key. The lock is only as strong as your belief in the narrative.

Block 1: “I don’t have time.”

The reality: According to PwC, professionals who consistently apply AI to their daily workflows report recapturing an average of 47% of previously consumed task time, not someday, not in theory, but within weeks of adoption. The investment to get there is approximately 15 minutes per day for the first 30 days. That is about 3% of an eight-hour working day, or roughly 1% of a full 24-hour day.

The math: 15 minutes invested × 30 days = 7.5 hours invested. Return: potentially hundreds of hours recovered annually. At 47:1, those 7.5 hours would correspond to roughly 350 hours recovered. No financial analyst at BankUnited would reject a 47:1 return on investment because the upfront cost was inconvenient.

The 15 minutes matters. Start there. Not with a three-day training summit. Fifteen minutes, tomorrow morning, before your first meeting.
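The arithmetic in Block 1 can be checked with a short sketch. It assumes an eight-hour working day, which the chapter does not state, and it takes the 47:1 ratio at face value simply to show how many hours that ratio would imply. The function names are illustrative.

```python
# Sanity check of the Block 1 arithmetic.
# Assumption (not stated in the chapter): an eight-hour working day.

MINUTES_PER_HOUR = 60


def hours_invested(minutes_per_day: float, days: int) -> float:
    """Total practice time in hours."""
    return minutes_per_day * days / MINUTES_PER_HOUR


def share_of_day(minutes_per_day: float, day_length_hours: float) -> float:
    """Fraction of a day of the given length taken up by the daily practice."""
    return minutes_per_day / (day_length_hours * MINUTES_PER_HOUR)


def hours_implied_by_ratio(invested_hours: float, ratio: float) -> float:
    """Hours returned if every invested hour returns `ratio` hours."""
    return invested_hours * ratio


if __name__ == "__main__":
    invested = hours_invested(minutes_per_day=15, days=30)
    print(f"Invested: {invested} hours")
    print(f"Share of an 8-hour working day: {share_of_day(15, 8):.1%}")
    print(f"Share of a 24-hour day: {share_of_day(15, 24):.1%}")
    print(f"Hours implied by a 47:1 return: {hours_implied_by_ratio(invested, 47)}")
```

The invested time comes to 7.5 hours, and a 47:1 return on that would be 352.5 hours. Fifteen minutes is a little over 3% of an eight-hour day and about 1% of a 24-hour day.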

Block 2: “AI will replace me.”

The reality: This is the most emotionally loaded block and the most thoroughly researched. An MIT study in 2024 found that AI + human collaboration outperforms AI alone by 23% — not in one scenario, but consistently across complex tasks. A separate McKinsey analysis found the same pattern: the highest-performing AI deployments are not the ones with the most automation, but the ones with the best human-AI collaboration design.

The future is not “AI instead of people.” The future is “AI-augmented people outperforming non-augmented people.” The competitive threat is not from AI. It is from other humans who adopt AI before you do.

Be the human in the loop. The loop still needs a human. Be that human.

Block 3: “It’s too technical for me.”

The reality: Modern Microsoft 365 Copilot requires zero coding knowledge, zero data science background, and zero technical certification. If you can write a coherent email, you can write a Copilot prompt. The interface is a text box. The skill is communication — which you already have.

The evidence: The fastest AI adopters in most organizations are not the technical staff. They are the professionals whose core job is language and judgment — writers, lawyers, bankers, teachers, sales professionals. The “technical” barrier to modern AI tools effectively no longer exists. The barrier is habit, not aptitude.

Block 4: “My industry is different.”

The reality: Accenture’s 2025 analysis found that 87% of industries show measurable productivity gains from AI adoption, across sample sizes large enough to be statistically meaningful. Banking specifically — financial services broadly — is among the top five sectors for measured AI impact, precisely because so much of banking work involves the exact tasks AI does best: synthesizing information, drafting communications, analyzing structured data, and preparing documentation.

Banking is not exempt from the AI era. It is one of the most targeted by it.

Block 5: “Quality will suffer.”

The reality: Stanford research on AI-assisted knowledge work found that, when used correctly, AI-assisted work shows fewer errors than purely manual work — because AI doesn’t get tired at 4 PM, doesn’t rush when under deadline pressure, and doesn’t miss steps in a checklist because it got distracted by a phone call.

The critical phrase is “when used correctly.” That means using AI for first drafts and synthesis, then applying human judgment and verification for everything that goes out the door. The verification discipline from the Safety Briefing above is not just a compliance measure — it is also what makes AI-assisted work better, not worse, than unassisted work.

11. The Two Questions That Reorganize Your Career

We have arrived at the practical heart of this chapter. Because all of the frameworks above — the growth mindset, the value equation, the five blocks — exist to get you to a point where you can answer two questions honestly.

Not aspirationally. Not with what you think you should say. Honestly.

The gap between your two lists is your personal AI roadmap.

The tasks in Question One are where you invest in building AI habits — because that is work AI should be doing, and every hour you spend on it manually is an hour you are not spending on Question Two.

The capabilities in Question Two are what you protect, deepen, and double down on — because that is where your compounding advantage lives. AI will get better at Question One tasks every six months. Your Question Two capabilities get better every year you spend developing them with greater bandwidth, freed by AI handling Question One.

This is your strategy. Not the bank’s AI strategy — your personal professional strategy. And it starts with two honest lists.

12. Productive Friction: AI as a Learning Partner, Not a Crutch

Here is where we introduce a concept that is central to Dr. Lee’s teaching philosophy and applies directly to how you should use AI at BankUnited.

The concept is productive friction.

There is a line — thin but critical — that separates the professionals who become more capable through AI use from those who become less capable. Both groups use the same tools. Both groups get outputs. But only one group grows.

Table 3: Unproductive AI Use vs. Productive Friction

| Unproductive AI Use | Productive Friction |
|---|---|
| Outsourcing thinking completely | Iterative human-AI collaboration |
| Copy-pasting without evaluation | Critical evaluation of every output |
| Using AI to avoid learning | Using AI to accelerate learning |
| Accepting outputs blindly | Challenging AI assumptions |
| Relying on AI for ethics | Applying human judgment to every recommendation |
| AI as autopilot | AI as sparring partner |

The unproductive user gets the first-draft email from Copilot, changes the name at the top, and sends it. The productive friction user reads the draft, asks: “What is this missing? What would I say differently? Why did Copilot phrase it this way? What does this reveal about how I should have framed the original request?” They learn. The output gets better. Their prompting skill grows. Their judgment is sharpened by the friction of evaluation.

Here is the pedagogical truth behind this: meaningful difficulty is essential to learning. Not unnecessary difficulty — not doing things the hard way for its own sake. But the difficulty of evaluating, challenging, and improving an AI output is a genuine cognitive workout. It develops your judgment. It makes you better at your job, not just faster at your current level.

AI doesn’t remove the struggle. It changes what you struggle with. And the new struggles — evaluation, synthesis, strategic judgment, ethical reasoning — are the ones that make you more valuable, not less.

13. In a World of Algorithms, People Still Matter Most: The 10 Human Superpowers

Let’s close this chapter where it should close: with an unambiguous statement of what makes you irreplaceable.

Not as consolation. As strategy.

Because understanding what AI cannot do is not just emotionally comforting — it is professionally essential. The capabilities listed below are not just things that AI is “not quite good at yet.” They are capabilities that emerge from human experience, human embodiment, human relationship, and human moral agency — things that do not have algorithmic approximations, now or on any near-term horizon.

These are the ten human superpowers. Double down on every one of them.

Figure 9: The ten capabilities that AI amplifies but cannot replicate. These are your professional franchise: protect them, develop them, and deploy them deliberately.

1. Leave fingerprints — authenticity is your edge. AI generates content. You have a voice. Your clients can feel the difference between an email that came from you and an email that came from a template, even if they can’t name it. The relationships that matter most at BankUnited are built on the feeling that someone real is paying attention. Be that someone.

2. Build trust that compounds. AI can draft the follow-up email. It cannot have dinner with the client, remember their daughter’s college graduation, show up in a difficult moment, or be the person they call on a Sunday when something goes wrong. Trust built over years of consistent, personal attention compounds in ways that no algorithm can approximate or displace.

3. Tell stories that stick. Meaning is made through narrative. The most effective RM pitch is not the one with the most complete data package — it is the one that tells the right story about what the client’s future looks like with BankUnited’s support. AI can help you draft the story. Only you can feel whether it lands.

4. Spot what others miss. You have institutional knowledge, client history, and contextual awareness that no external AI model has access to. The pattern that looks like noise in the data but strikes you as significant — because you were in the room when something similar happened three years ago — that is judgment. Guard it.

5. Read the room. Presence. Body language. The moment when you sense that the client’s stated objection is not their real objection. The knowledge that this is the moment to push and this is the moment to listen. These are not learnable from training data. They are earned through experience and sharpened through attention.

6. Ask questions AI doesn’t ask. AI asks from patterns. You ask from gaps — from the thing you noticed that doesn’t fit, from the follow-up that occurs to you because you know what this client usually doesn’t say. The best questions in banking are not predictable. They are the product of a mind that is genuinely curious and genuinely present.

7. Trust your taste. AI generates volume. Judgment curates quality. The ability to look at ten AI-generated options and know — immediately, from experience — which one is right for this client, this moment, this relationship: that is taste. It develops over years. It cannot be prompted.

8. Be the one who gets it done. Everyone will have AI. Not everyone will execute. The combination of initiative, accountability, and follow-through — the quality that makes clients and colleagues say “if I give this to her, it actually happens” — is as rare and valuable as ever. Probably more so, in a world where AI handles the easy parts and execution becomes the differentiator.

9. Empathize beyond algorithms. Be the person someone trusts more than AI. Not because you are smarter — Copilot may know more about their industry than you do. But because you are present. Because your concern is genuine. Because your relationship is real. In banking — where the most important moments are often the hardest ones — this matters more than any technical capability.

10. Protect your reputation. One AI-generated output with your name on it that turns out to be factually wrong, inappropriately worded, or damaging to a client relationship can cost years of trust. Your reputation is not a feature of your LinkedIn profile. It is the accumulated perception of thousands of interactions, over years, with the people who matter most to your career. AI assists that process. It cannot protect it. Only you can do that.

14. 🧪 Try This: Three Exercises in Mindset

These exercises are not optional. They are the chapter. The concepts above become real only when you practice them. Budget 30 minutes total.

15. Chapter Close

The work in this chapter is invisible from the outside.

No one saw you make the mindset shift. No one knows whether you answered the two lists honestly, whether the sparring partner prompt revealed something about a decision you’re carrying, or whether the reframe from “this is happening to me” to “this is happening for me” landed somewhere real.

But here is what is true: everything that follows in this book will work better because you did this chapter. Every Word draft, every Excel model, every Teams knowledge base, every Copilot agent you build in the chapters ahead — the quality of all of it is shaped by whether you approach the tool with a growth mindset or a fixed one, with curiosity or resignation, with a willingness to experiment or a posture of minimal compliance.

Mindset is not the soft skill.

It is the operating system.

And now yours is updated.

AI Orchestrator: A professional who directs and coordinates AI tools, agents, and capabilities to produce outcomes that exceed what either the human or the AI could achieve independently. The role this master class is training you for.

Productive Friction: The deliberate practice of critically evaluating, challenging, and improving AI outputs, as opposed to passive acceptance, in order to develop professional judgment rather than replace it.

Identity Layer: The deepest layer of AI adoption resistance, rooted in the belief that one’s professional identity is defined by specific tasks rather than judgment and relationships. Resolving the identity layer is the prerequisite to sustained AI adoption.

Permission Gap: The organizational condition in which professionals want to learn and use AI but are waiting for explicit organizational permission, guidance, or cultural safety signals before they begin.

The Two Lists: A reflective exercise in which a professional honestly enumerates which tasks AI will handle better (to be delegated) and which capabilities they will do better with AI (to be amplified), producing a personal AI adoption roadmap.

Sparring Partner Mode: A prompting approach in which Copilot is directed to take an adversarial, skeptical perspective on the user’s reasoning, functioning as an intellectual challenge rather than a task-completer.

Reverse Prompt: A prompting technique in which the user asks Copilot to request all needed clarifications before producing any output, resulting in more contextually appropriate and useful responses.

Value Equation (New): The expression (Human Judgment × AI Capability)^Collaboration, describing how AI-augmented professional value grows exponentially rather than linearly.